Video Signage Analytics

Now let's have a look at how the analytics module integrates into the Chocolate app from the Video Signage tutorial. It's a non-interactive digital signage app that plays several different videos directly in the remote player.

- Repo: https://github.com/synamedia-senza/chocolate/

- Pull request: https://github.com/synamedia-senza/chocolate/pull/1/files

Clone the repo and switch to the analytics branch to follow along. As in the previous example, the pull request shows the code that changed when integrating the analytics module.

Setup

We'll call init() just like in the example above.

let config = await loadAnalyticsConfig();

await analytics.init("Chocolate", {

google: {gtag: config.googleAnalyticsId, debug: true},

ipdata: {apikey: config.ipDataAPIKey},

userInfo: {chocolate: "mmmmmmm"},

lifecycle: {raw: false, summary: true},

player: {raw: false, summary: true}

});
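The two values read from config above come from config.json. As a rough sketch, the parsed file would need to look something like the object below. The key names are taken from the init() call; the values and the name exampleConfig are placeholders for illustration, not real credentials or part of the app.

```javascript
// Hypothetical shape of the object parsed from config.json.
// Key names match what the init() call reads; values are placeholders.
const exampleConfig = {
  googleAnalyticsId: "G-XXXXXXXXXX",   // placeholder GA4 measurement ID
  ipDataAPIKey: "your-ipdata-api-key"  // placeholder ipdata API key
};

console.log(Object.keys(exampleConfig));
```

If the file is missing, the loader returns an empty object, so both values passed to init() are undefined.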

async function loadAnalyticsConfig(url = "./config.json") {

try {

const res = await fetch(url, { cache: "no-store" });

if (!res.ok) return {};

return await res.json();

} catch {

console.warn("Analytics config.json not found");

return {};

}

}

Tracking remote player events

This time, instead of using the Shaka Player and a video element, we'll play video in the Remote Player. We'll call the trackRemotePlayerEvents() function, which can access the singleton instance of the remote player from the SDK.

analytics.trackRemotePlayerEvents(getMetadata);

Metadata

const descriptions = {

"chocolate0": "A stream of molten chocolate.",

"chocolate1": "Chunks of chocolate flying in the air.",

"chocolate2": "A few chunks of chocolate falling down.",

"chocolate3": "Chocolate shavings falling on a stack of chocolate.",

"chocolate4": "A scoop of small chunks of chocolate.",

"chocolate5": "Chocolate shavings falling in front of a big stack of chocolate.",

"chocolate6": "Just lots of chocolate shavings.",

"chocolate7": "More chocolate shavings falling on two stacks of chocolate.",

"chocolate8": "Tiny flakes falling on some big chunky pieces of chocolate.",

"chocolate9": "An epic explosion of chocolate chunks!"

};

function getMetadata(ctx) {

const contentId = filename(ctx.url);

return {contentId, description: descriptions[contentId]};

}

function filename(url) {

const parts = url.split('/').pop() || '';

return parts.replace(/\.[^/.]+$/, '');

}

This time, we'll pass in a function that is used to look up the metadata. It is passed a context object with the following properties:

- url: the URL of the manifest

- player: the player object

- media: the video media element

In this example, we'll simply extract the content ID from the url, use it to look up a description of the content, and include both in the response.
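To make the lookup concrete, here is a small standalone sketch that runs getMetadata against a hand-built context object. The example.com URL is a placeholder, the descriptions table is trimmed to one entry, and player and media are set to null because this getMetadata only reads ctx.url. In the app, the analytics module builds the context object itself.

```javascript
// Lookup table trimmed to a single entry for this sketch.
const descriptions = {
  "chocolate3": "Chocolate shavings falling on a stack of chocolate."
};

// Take the last path segment of the URL and strip its extension.
function filename(url) {
  const last = url.split('/').pop() || '';
  return last.replace(/\.[^/.]+$/, '');
}

// Same logic as the app's getMetadata: derive the content ID from the URL
// and look up its description.
function getMetadata(ctx) {
  const contentId = filename(ctx.url);
  return {contentId, description: descriptions[contentId]};
}

// Hypothetical context object; the URL is a placeholder.
const ctx = {
  url: "https://example.com/videos/chocolate3.mpd",
  player: null,
  media: null
};

const metadata = getMetadata(ctx);
console.log(metadata); // contentId is "chocolate3"
```

A content ID that is not in the table yields description: undefined, so the table needs an entry for every video the app plays.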

Logging events

The Chocolate app displays a chocolate-themed word in a cursive font on the screen between the videos. We'll use the basic logging functionality of the analytics module to track which words are shown:

analytics.logEvent("word", {"word": word.innerHTML});

This simply logs an event called word with metadata like {"word": "yummy"}.

Testing

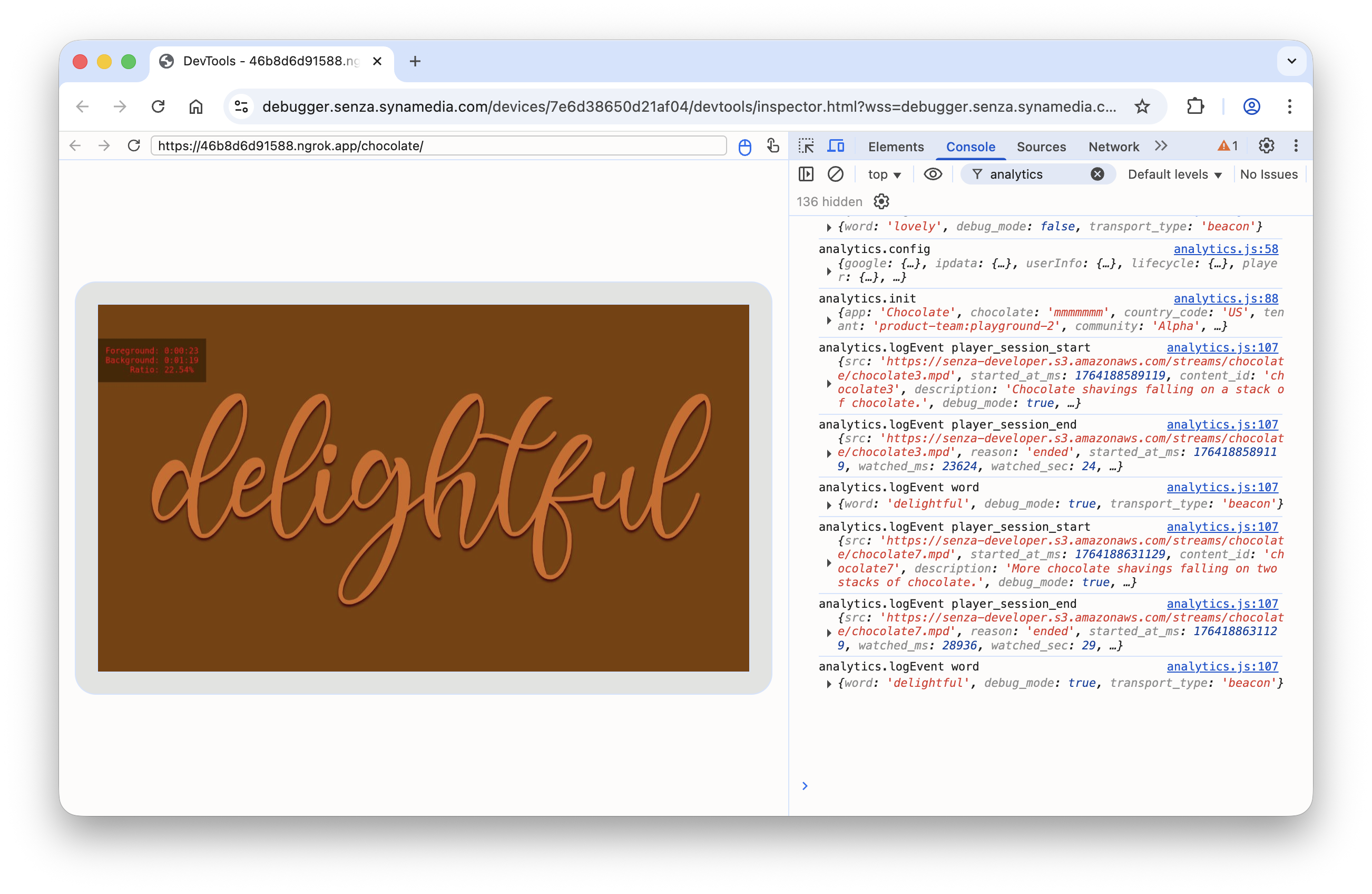

In this digital signage app, the script runs automatically as the app cycles between showing a word in foreground mode and playing a video in background mode. In the debugger, you can see the words that are shown and the details of the videos that are played.

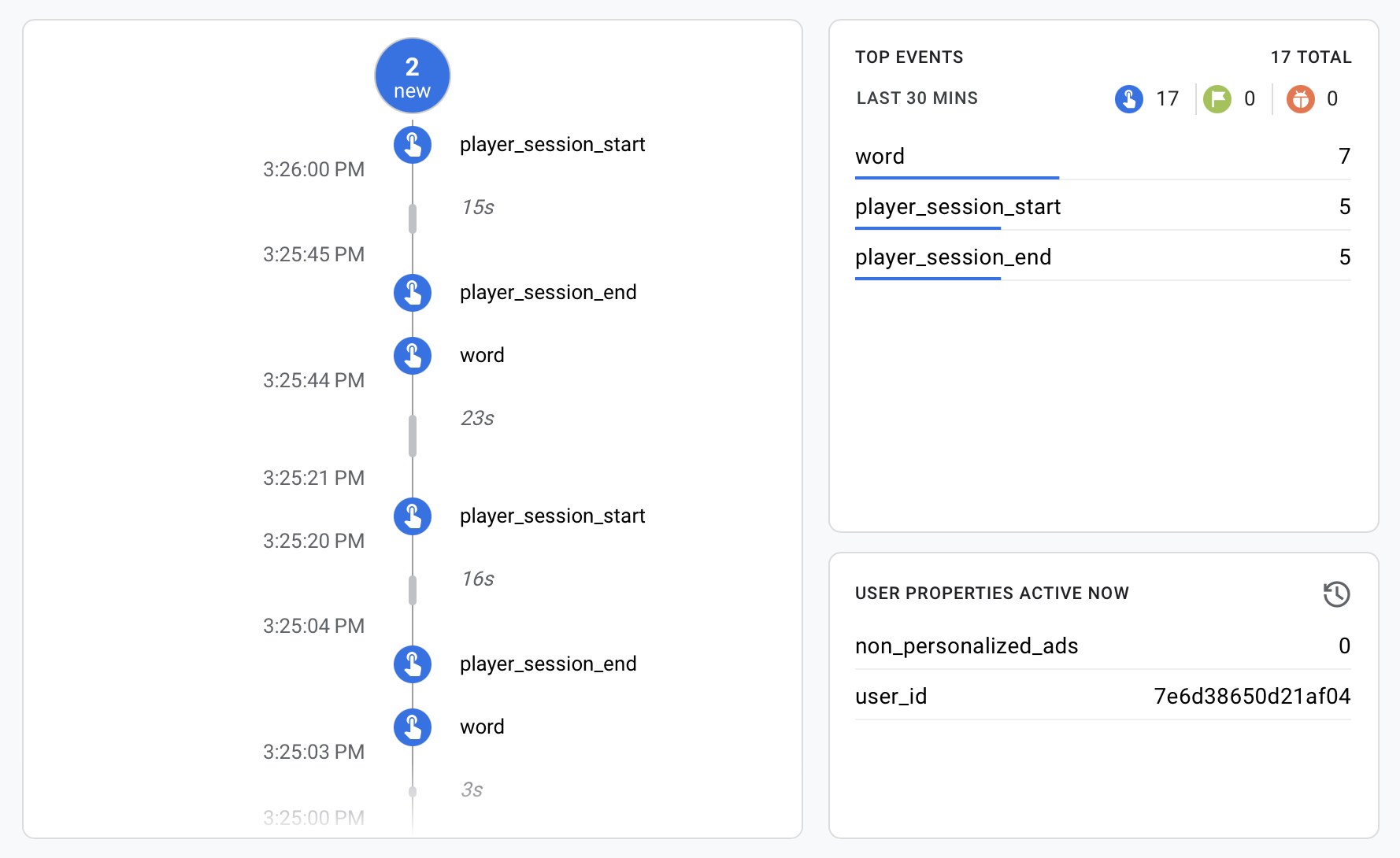

You can see the same events in GA4 in the timeline view.

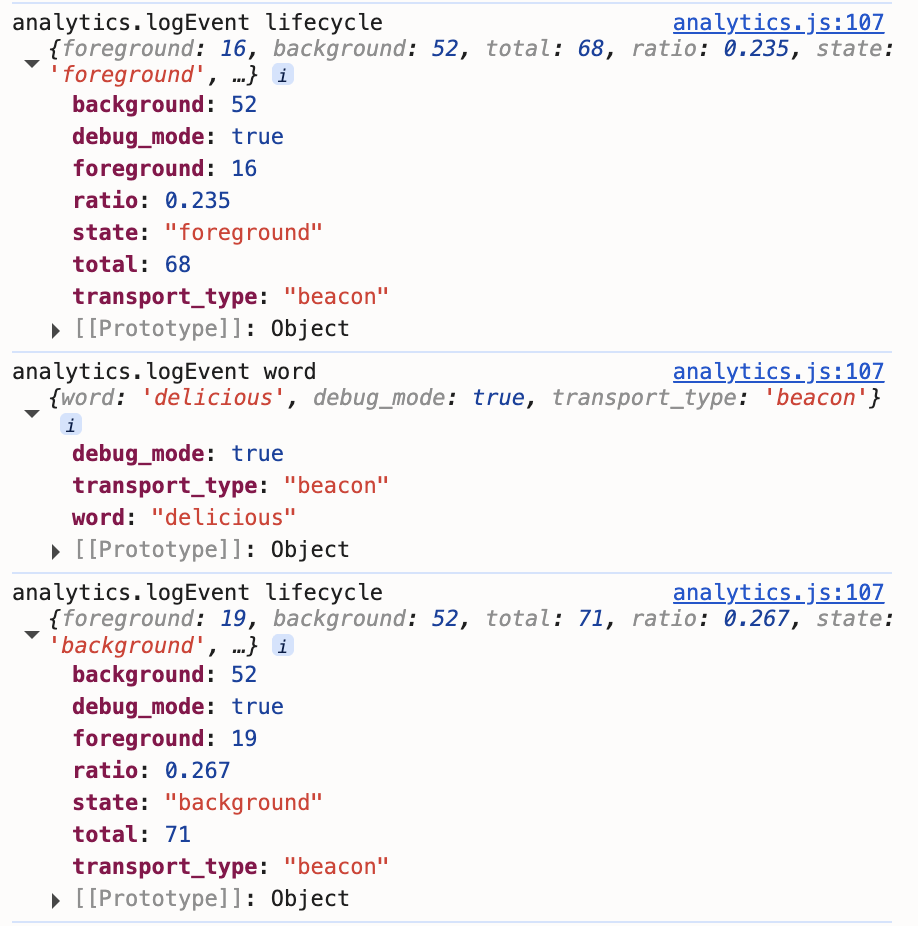

If you turn on lifecycle.raw, you'll see lifecycle events each time the app moves between foreground and background mode:

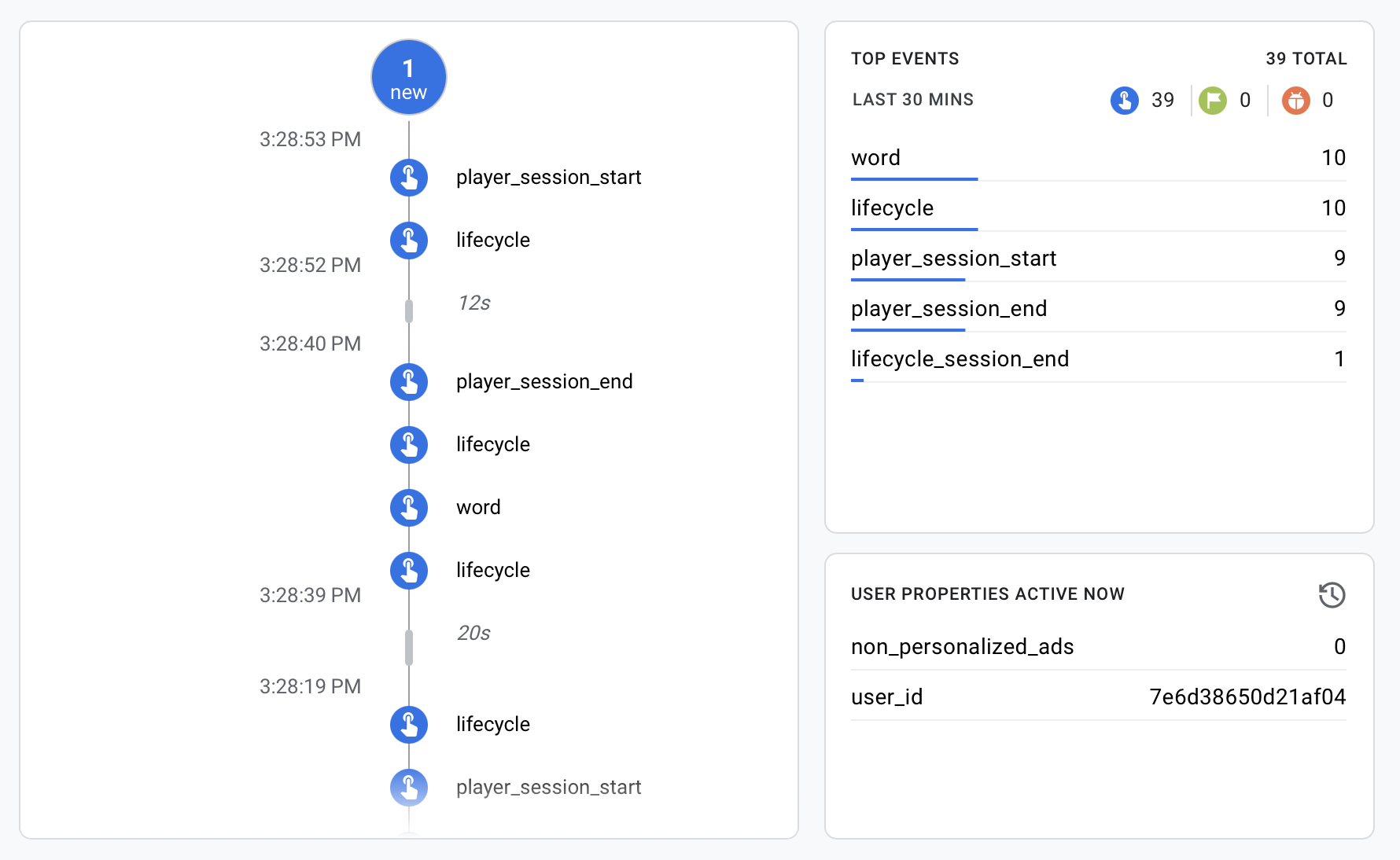

And the same in the timeline view:
